
E-mail

dovyski@gmail.com (personal)

fernando@optidata.cloud (work)

Address (work)

Optidata

Pilastri Office Park, Av. Nereu Ramos, 1866 E, 4º floor

Chapecó, SC, Brazil. Zip code 89805-100

This is a list of my most relevant research publications. For a complete list of all my academic activities, check my Lattes CV or my Google Scholar profile.

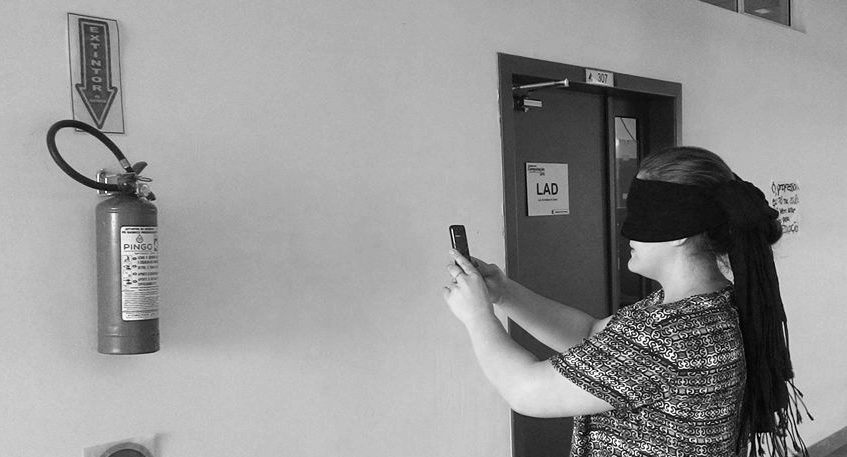

Visually impaired (VI) people face a set of challenges when trying to orient and contextualize themselves. Computer vision and mobile devices can be valuable tools to help them improve their quality of life. This work presents a tool based on computer vision and image recognition to help VI people better contextualize themselves indoors. The tool works as follows: the user takes a picture p using a mobile application; p is sent to the server; p is compared to a database of previously taken pictures; the server returns the metadata of the database image that is most similar to p; finally, the mobile application gives audio feedback based on the received metadata. The similarity test between the database images and p is based on a nearest-neighbor search over key points extracted from the images by SIFT descriptors. Three experiments are presented to support the feasibility of the tool. We believe our solution is a low-cost, convenient approach that can leverage existing IT infrastructure, e.g. wireless networks, and does not require any physical adaptation of the environment where it will be used.
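The matching step described above can be sketched as follows. This is a minimal illustration, not the tool's actual code: descriptors are plain tuples (real SIFT descriptors have 128 dimensions), the ratio test used to filter ambiguous matches is an assumption on my part, and the names `count_good_matches` and `most_similar` are hypothetical.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length descriptor vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def count_good_matches(query_desc, db_desc, ratio=0.75):
    """Count query descriptors with an unambiguous nearest neighbour.

    A match is kept only when the nearest database descriptor is clearly
    closer than the second nearest (ratio test, assumed here).
    """
    good = 0
    for q in query_desc:
        dists = sorted(euclidean(q, d) for d in db_desc)
        if len(dists) >= 2 and dists[0] < ratio * dists[1]:
            good += 1
    return good


def most_similar(query_desc, database):
    """Return the metadata of the entry with the most good matches.

    `database` is a list of (metadata, descriptors) pairs.
    """
    best = max(database, key=lambda entry: count_good_matches(query_desc, entry[1]))
    return best[0]


# Toy 2-D descriptors standing in for SIFT output (hypothetical data).
query = [(0.0, 0.0), (10.0, 10.0)]
database = [
    ("Room 101", [(0.1, 0.0), (10.0, 10.1), (50.0, 50.0)]),
    ("Library", [(30.0, 30.0), (31.0, 31.0), (60.0, 60.0)]),
]
label = most_similar(query, database)
```

In the tool, the returned metadata would be what the mobile application turns into audio feedback.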

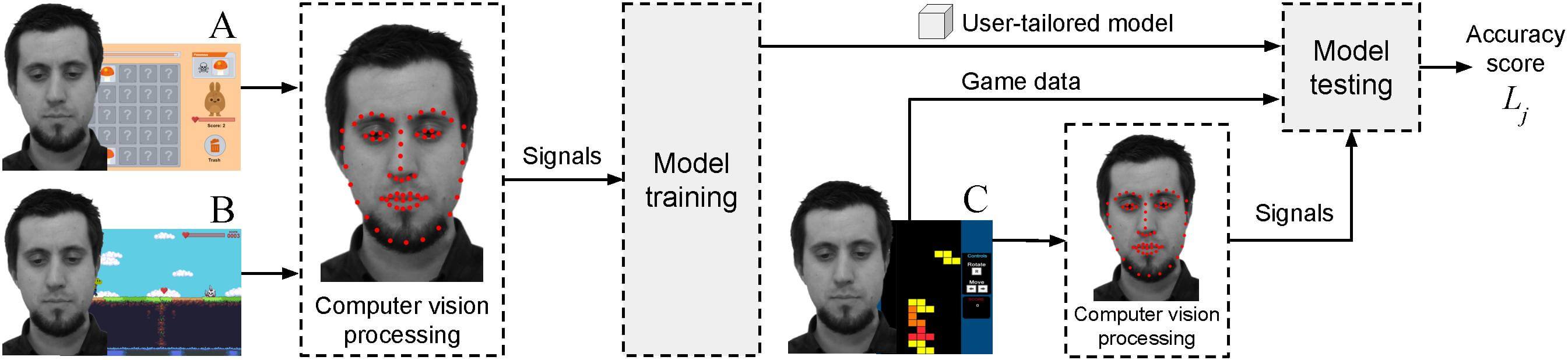

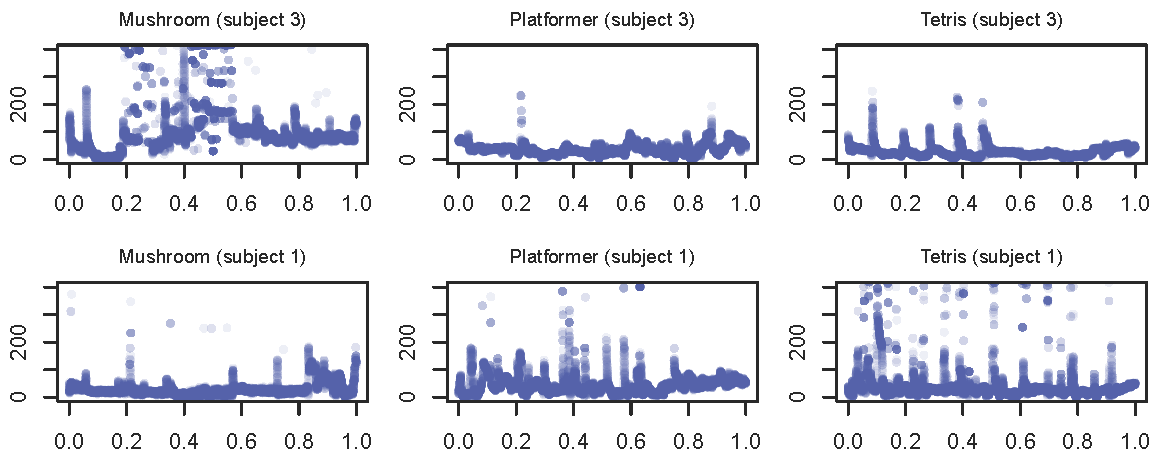

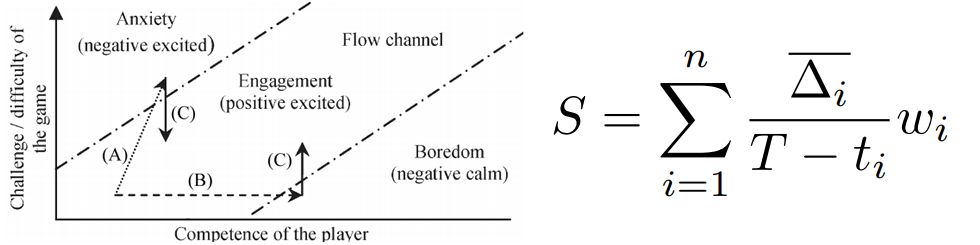

Emotion detection based on computer vision and remote extraction of user signals commonly relies on stimuli where users have a passive role with limited possibilities for interaction or emotional involvement, e.g. images and videos. Predictive models are also trained on a group level, which potentially excludes or dilutes key individualities of users. We present a non-obtrusive, multifactorial, user-tailored emotion detection method based on remotely estimated psychophysiological signals. A neural network learns the emotional profile of a user during the interaction with calibration games, a novel game-based emotion elicitation material designed to induce emotions while accounting for particularities of individuals. We evaluate our method in two experiments (N = 20 and N = 62) with mean classification accuracy of 61.6%, which is statistically significantly better than chance-level classification. Our approach and its evaluation present unique circumstances: our model is trained on one dataset (calibration games) and tested on another (evaluation game), while preserving the natural behavior of subjects and using remote acquisition of signals. Results of this study suggest our method is feasible and is an initiative to move away from questionnaires and physical sensors toward a non-obtrusive, remote-based solution for detecting emotions in a context involving more naturalistic user behavior and games.

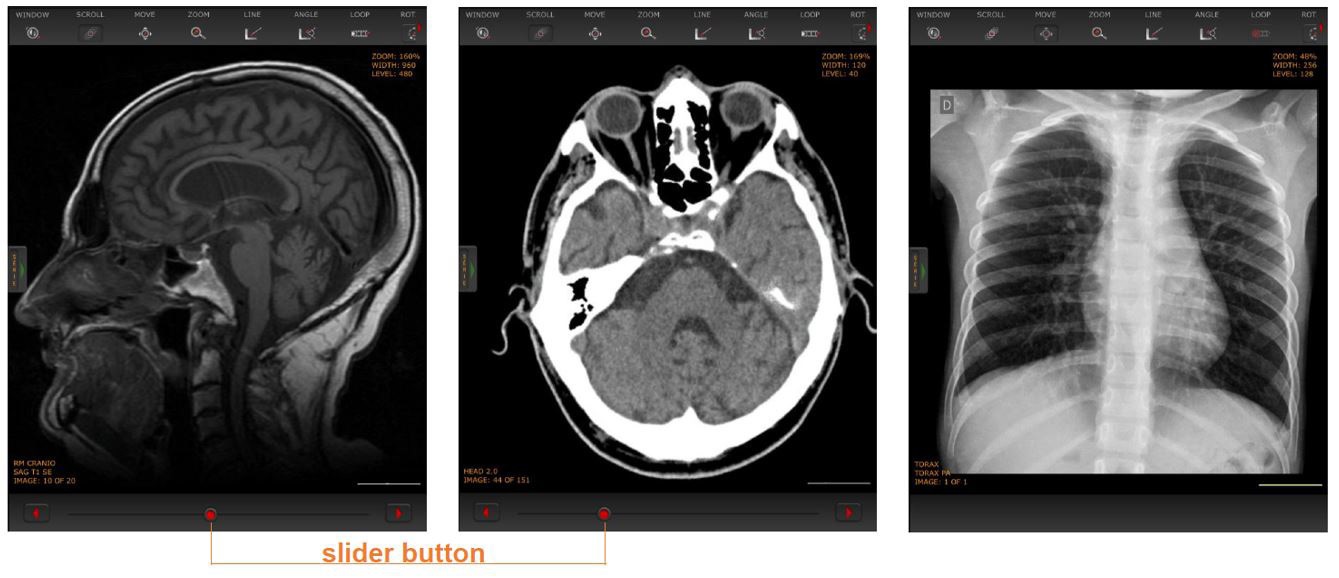

Introduction: Mobile devices and software are now available with sufficient computing power, speed and complexity to allow for real-time interpretation of radiology exams. In this paper, we perform a multivariable user study that investigates the concordance of image-based diagnoses made on mobile devices with those made on conventional workstations. Methods: We performed a between-subjects task analysis using CT, MRI and radiography datasets. Moreover, we investigated the adequacy of the screen size, image quality, usability and the availability of the tools necessary for the analysis. Radiologists, members of several teams, participated in the experiment under real work conditions. A total of 64 studies with 93 main diagnoses were analyzed. Results: Our results showed that 56 cases were classified with complete concordance (87.69%), 5 cases with almost complete concordance (7.69%) and 1 case (1.56%) with partial concordance. Only 2 studies presented discordance between the reports (3.07%). The main cause of those disagreements was the lack of a multiplanar reconstruction tool in the mobile viewer. Screen size and image quality had no direct impact on the mobile diagnosis process. Conclusion: We concluded that for images from emergency modalities, a mobile interface provides accurate interpretation and swift response, which could benefit patients' healthcare.

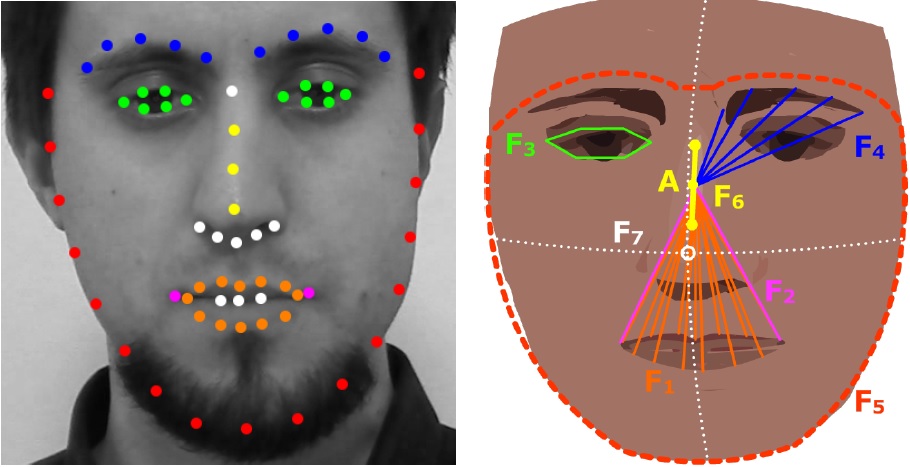

Facial analysis is a promising approach to detect emotions of players unobtrusively; however, approaches are commonly evaluated in contexts not related to games, or facial cues are derived from models not designed for analysis of emotions during interactions with games. We present a method for automated analysis of facial cues from videos as a potential tool for detecting stress and boredom of players behaving naturally while playing games. Computer vision is used to automatically and unobtrusively extract 7 facial features aimed at detecting the activity of a set of facial muscles. Features are mainly based on the Euclidean distance between facial landmarks and do not rely on pre-defined facial expressions, training of a model or the use of facial standards. An empirical evaluation was conducted on video recordings of an experiment involving games as emotion elicitation sources. Results show statistically significant differences in the values of facial features during boring and stressful periods of gameplay for 5 of the 7 features. We believe our approach is more user-tailored, convenient and better suited for contexts involving games.
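A distance-based facial feature of the kind described above could look like the sketch below. The landmark names, the choice of mouth opening as the feature, and the normalization by inter-ocular distance are all assumptions for illustration; the abstract does not list the 7 features.

```python
import math


def distance(p, q):
    """Euclidean distance between two 2-D landmark points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])


def normalized_feature(landmarks, a, b, ref_a="left_eye", ref_b="right_eye"):
    """Distance between landmarks `a` and `b`, divided by a reference distance.

    Dividing by the inter-ocular distance (an assumed normalization) makes
    the value less sensitive to how far the face is from the camera.
    """
    reference = distance(landmarks[ref_a], landmarks[ref_b])
    return distance(landmarks[a], landmarks[b]) / reference


# Hypothetical landmark coordinates in pixels for a single video frame.
frame_landmarks = {
    "left_eye": (0.0, 0.0),
    "right_eye": (4.0, 0.0),
    "upper_lip": (2.0, 3.0),
    "lower_lip": (2.0, 5.0),
}
mouth_opening = normalized_feature(frame_landmarks, "upper_lip", "lower_lip")
```

Computed per frame, such a value yields a time series that can be compared between boring and stressful periods without training a model.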

This paper presents an experiment aimed at exploring the relation between facial actions (FA), heart rate (HR) and emotional states, particularly stress and boredom, during the interaction with games. Subjects played three custom-made games with a linear and constant progression from a boring to a stressful state, without pre-defined levels, modes or stopping conditions. Such a configuration gives our experiment a novel approach for the exploration of FA and HR regarding their connection to emotional states, since we can categorize information according to the induced (and theoretically known) emotional states on a user level. The HR data was divided into segments, whose mean HR was calculated and compared across periods (boring/stressful part of the games). Additionally, the 6 hours of recordings were manually analyzed and FA were annotated and categorized in the same periods. Findings show that variations of HR and FA on a group and on an individual level are different when comparing boring and stressful parts of the gaming sessions. This paper contributes information regarding variations of HR and FA in the context of games, which can potentially be used as input candidates to create user-tailored models for emotion detection with game-based emotion elicitation sources.
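The segment-and-compare step for HR can be sketched as below. The segment size, the rule that the first segments are the boring period and the last ones the stressful period, and the function names are assumptions for illustration; the abstract does not give these details.

```python
from statistics import mean


def segment_means(hr_samples, segment_size):
    """Split HR samples (bpm) into consecutive segments and average each one.

    The last segment may be shorter when the sample count is not a
    multiple of `segment_size`.
    """
    return [
        mean(hr_samples[i:i + segment_size])
        for i in range(0, len(hr_samples), segment_size)
    ]


def period_difference(hr_samples, segment_size, k=1):
    """Mean HR of the last `k` segments minus the mean of the first `k`.

    Assumes the session starts boring and ends stressful.
    """
    means = segment_means(hr_samples, segment_size)
    return mean(means[-k:]) - mean(means[:k])


# Hypothetical HR readings for one subject in one game.
hr = [70, 72, 71, 73, 80, 82, 81, 83, 90, 92, 91, 93]
difference = period_difference(hr, segment_size=4)
```

A positive difference means the subject's mean HR was higher in the stressful part than in the boring part.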

Remote photoplethysmography (rPPG) can be used to remotely estimate the heart rate (HR) of users to infer their emotional state. However, natural body movement and facial actions of users significantly impact such techniques, so their reliability within contexts involving natural behavior must be checked. We present an experiment focused on the accuracy evaluation of an established rPPG technique in a gaming context. The technique was applied to estimate the HR of subjects behaving naturally in gaming sessions whose games were carefully designed to be casual-themed, to be similar to off-the-shelf games and to have a difficulty level that linearly progresses from a boring to a stressful state. Estimations presented a mean error of 2.99 bpm and a Pearson correlation of r = 0.43, p < 0.001, although with significant variations among subjects. Our experiment is the first to measure the accuracy of an rPPG technique using boredom/stress-inducing casual games with subjects behaving naturally.
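The two accuracy figures reported above can be computed as in the following sketch. It assumes "mean error" refers to the mean absolute error between estimated and reference HR, which the abstract does not state, and the sample values are made up.

```python
import math


def mean_absolute_error(estimated, reference):
    """Average absolute difference between paired HR values, in bpm."""
    return sum(abs(e - r) for e, r in zip(estimated, reference)) / len(reference)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return covariance / (spread_x * spread_y)


# Hypothetical paired readings: physical sensor versus rPPG estimate.
reference_hr = [60, 70, 80, 90]
estimated_hr = [62, 69, 83, 88]
error = mean_absolute_error(estimated_hr, reference_hr)
correlation = pearson_r(estimated_hr, reference_hr)
```

Computing both values per subject, not only over the pooled data, is what exposes the variation among subjects.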

Introduction: A medical application running outside the workstation environment has to deal with several constraints, such as reduced available memory and low network bandwidth. The aim of this paper is to present an approach to optimize the data flow for fast image transfer and visualization on mobile devices and remote stationary devices. Methods: We use a combination of client- and server-side procedures to reduce the amount of information transferred by the application. Our approach was implemented on top of a commercial PACS and evaluated through user experiments with specialists in typical diagnosis tasks. The quality of the system outcome was measured in relation to the accumulated amount of data transferred over the network and the amount of memory used in the host device. In addition, the system's quality of use (usability) was measured through participants’ feedback. Results: Contrary to previous approaches, ours keeps the application within the memory constraints, minimizing data transfer whenever possible and allowing the application to run on a variety of devices. Moreover, it does so without sacrificing the user experience. Experimental data indicate that over 90% of the users did not notice any delays or degraded image quality, and when they did, these did not affect the clinical decisions. Conclusion: The combined activities and orchestration of our methods allow the image viewer to run in resource-constrained environments, such as those with low network bandwidth or little available memory. These results demonstrate the potential of mobile devices as a support tool in the medical workflow.

NOTE: Awarded best student paper of the conference.


This paper presents an experiment aimed at empirically exploring the variations of facial actions (FA) during gaming sessions with induced boredom and stress. Twenty adults of different ages and with different gaming experience played three games while being recorded by a video camera and monitored by a heart rate sensor. The games were carefully designed to have a linear progression from a boring to a stressful state. Self-reported answers indicate participants perceived the games as being boring at the beginning and stressful at the end. The 6 hours of recordings of all subjects were manually analyzed and FA were annotated. We annotated FA that appeared in the recordings at least twice; annotations were categorized by the period when they happened (boring/stressful part of the games) and analyzed on a group and on an individual level. Group level analysis revealed that FA patterns were related to no more than 25% of the subjects. The individual level analysis revealed particular patterns for 50% of the subjects. More FA annotations were made during the stressful part of the games. We conclude that, for the context of our experiment, FA provide an unclear foundation for detection of boredom/stressful states when observed from a group level perspective, while the individual level perspective might produce more information.

A medical application running outside the workstation environment has to deal with several limitations, such as reduced available memory and low network bandwidth. Adaptations and novel approaches are required for applications to overcome such problems. This paper presents an approach that uses a combination of client- and server-side procedures to dynamically optimize the data flow for fast image transfer and visualization on mobile and stationary devices. The main goal of our approach is to minimize the amount of data transferred to and used in the host device without sacrificing the user experience. Our approach was implemented and validated using a real use case, the application Animati Viewer, which is a web visualizer for diagnostic images. The evaluation used metrics such as the accumulated amount of data transferred over the network and the amount of memory used in the host device. The results show that our approach is feasible and, in one of our tests, it transferred only 7.73% of the amount of data downloaded by the OsiriX mobile.

The process of monitoring user emotions in serious games or human-computer interaction is usually obtrusive. The workflow is typically based on sensors that are physically attached to the user. Sometimes those sensors completely disturb the user experience, such as finger sensors that prevent the use of keyboard/mouse. This short paper presents techniques used to remotely measure different signals produced by a person, e.g. heart rate, through the use of a camera and computer vision techniques. The analysis of a combination of such signals (multimodal input) can be used in a variety of applications such as emotion assessment and measurement of cognitive stress. We present a research proposal for measuring players’ stress levels based on a non-contact analysis of multimodal user inputs. Our main contribution is a survey of commonly used methods to remotely measure user input signals related to stress assessment.

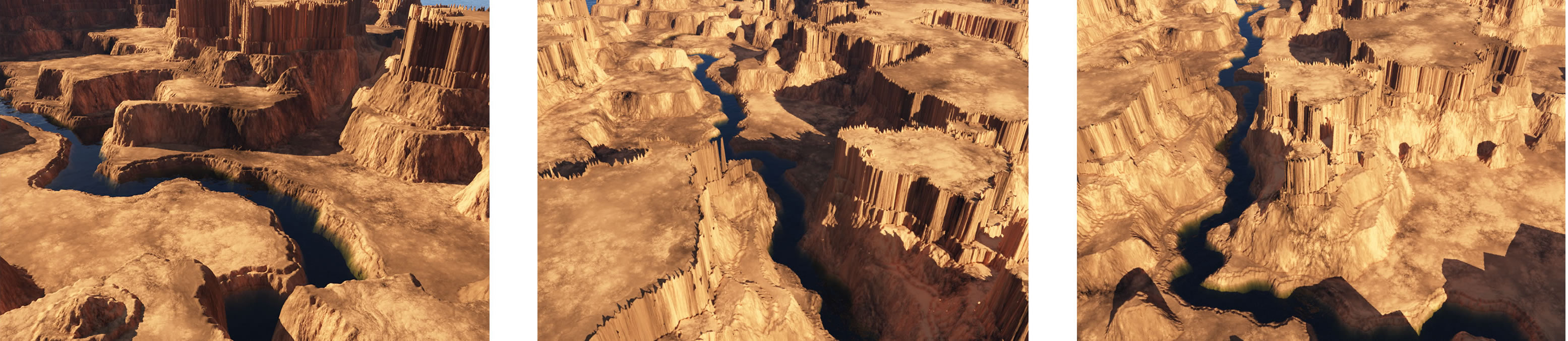

This paper presents a non-assisted method for procedural generation of 3D canyon scenes. Our approach combines techniques of computer graphics, computer vision, image processing and graph search algorithms. Our main contribution is a generation approach that uses noise-generated height maps that are carefully transformed and manipulated by data clustering (through the Mean Shift algorithm) and image operations in order to mimic the observed geological features of real canyons. Several parameters are used to guide and constrain the generation of terrains, canyon features, course and shape of rivers, plain areas, soft slope regions, cliffs and plateaus.
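To give an idea of how Mean Shift can turn noisy heights into flat, plateau-like levels, here is a one-dimensional sketch with a flat kernel. It is an illustration only: the bandwidth, the iteration count, the sample heights and the function names are assumptions, and the actual method works on full height maps together with the other image operations mentioned above.

```python
def shift_once(point, values, bandwidth):
    """Move `point` to the mean of all values within `bandwidth` of it."""
    neighbours = [v for v in values if abs(v - point) <= bandwidth]
    return sum(neighbours) / len(neighbours) if neighbours else point


def mean_shift_heights(heights, bandwidth, iterations=30):
    """Replace each height by the cluster mode it converges to.

    Heights that end up at the same mode form one flat level, which is
    how noisy terrain can be turned into plateaus separated by cliffs.
    """
    modes = list(heights)
    for _ in range(iterations):
        modes = [shift_once(m, heights, bandwidth) for m in modes]
    return modes


# Hypothetical noise-generated heights along one row of a height map.
row = [0.10, 0.12, 0.09, 0.80, 0.82, 0.79]
terraced = mean_shift_heights(row, bandwidth=0.1)
```

With these values the first three heights collapse to one low level and the last three to one high level, leaving a sharp step between them.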

The development of a complex game is a time-consuming task that requires a significant amount of content generation, including terrains, objects, characters, etc., which demands a lot of effort from the design team. The quality of such content impacts the project costs and budget. One of the biggest challenges concerning the content is how to improve its details and at the same time lower the creation costs. In this context, procedural content generation techniques can help to reduce the costs associated with content creation. This paper presents a survey of classical and modern techniques focused on procedural content generation suitable for game development. They can be used to produce terrains, coastlines, rivers, roads and cities. All techniques are classified as assisted (require human intervention/guidance in order to produce results) or non-assisted (require little or no human intervention/guidance to produce the desired results).

An important part of students' evolution through the computer science program is when they learn the object-oriented programming paradigm. This paper presents the preparation, execution and results of a game-related assignment experimentally applied to a group of students attending the object-oriented programming course during the first half of the second year of a computer science program. The task consisted of programming a software agent (described as a Java class) and testing it in a battle environment represented by an arena. The assignment implementation is based on a tool called Jarena that was developed by the authors for the experiment. The contribution of this paper is the tool itself and the documentation of the results the authors achieved with the experiment.

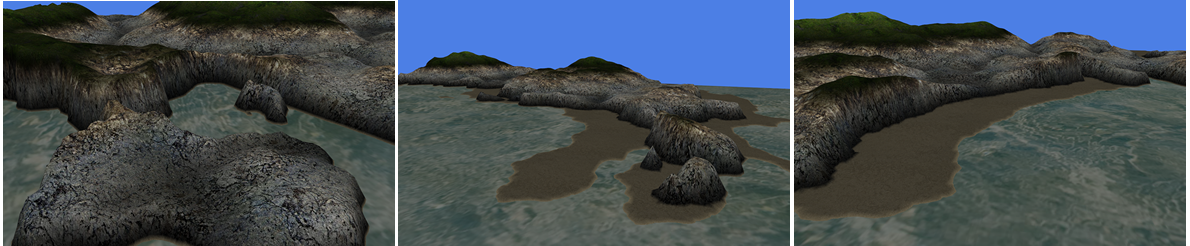

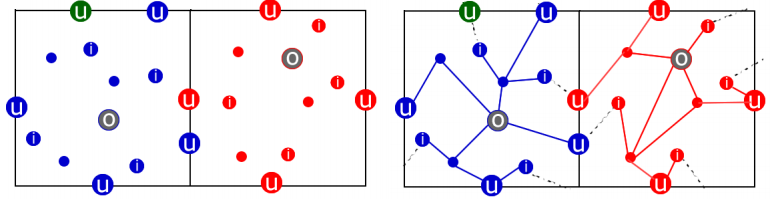

In MMO games the player’s experience is mainly influenced by the size and details of the virtual world. Technically, the bigger the world is, the longer the player takes to explore all the places. This work presents a tool (named Charack) able to generate pseudo-infinite virtual worlds with different types of terrains. Using a combination of algorithms and content management methods, Charack is able to create beaches, islands, bays and coastlines that imitate real-world landscapes. The tool clearly distinguishes the generation of each type of content. The contribution of the tool is the ability to generate arbitrarily large pieces of land (or landscape), focusing on detailed coastline generation, by means of procedural algorithms.

We present a proposal of a tool for pseudo-infinite 3D virtual world generation. The main idea is to split the problem of generating a virtual world into three main steps: the first is to generate the paths, the second is to generate the terrain and the last is to generate the world elements, such as grass over the fields, trees, rocks and plants. The world is divided into cells and only the ones that are visible to the user are kept in memory. To reach this goal, many techniques will be used, such as height maps, real-time city generation, terrain texturing and path-planning.
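The idea of keeping only the visible cells in memory can be sketched as below. This is an assumption-laden illustration, not the proposed tool: visibility is approximated by a square neighbourhood around the user, cell content is a short list of pseudo-random heights, and the `CellWorld` class and its parameters are hypothetical.

```python
import random


class CellWorld:
    """Pseudo-infinite world that keeps only nearby cells in memory."""

    def __init__(self, seed, cell_size=16, view_radius=1):
        self.seed = seed
        self.cell_size = cell_size
        self.view_radius = view_radius
        self.cells = {}  # (cx, cy) -> generated cell content

    def _generate(self, cx, cy):
        # Seeding with the world seed plus the cell coordinates means a
        # discarded cell is rebuilt identically when it is visited again.
        rng = random.Random(f"{self.seed}:{cx}:{cy}")
        return [rng.random() for _ in range(4)]

    def update(self, x, y):
        """Load the cells around position (x, y) and drop all others."""
        cx, cy = int(x // self.cell_size), int(y // self.cell_size)
        r = self.view_radius
        visible = {
            (cx + dx, cy + dy)
            for dx in range(-r, r + 1)
            for dy in range(-r, r + 1)
        }
        # Keep already loaded cells that are still visible, discard the rest.
        self.cells = {k: v for k, v in self.cells.items() if k in visible}
        for key in visible:
            if key not in self.cells:
                self.cells[key] = self._generate(*key)
        return visible


world = CellWorld(seed=42)
world.update(0, 0)
```

Memory use stays bounded by the number of visible cells regardless of how far the user travels, and deterministic seeding is what lets the world appear persistent.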

NOTICE: a more condensed version of this research is available in the final manuscript of the thesis, which is open access.

My master’s dissertation: Ferramenta para Geração de Mundos Virtuais Pseudo-Infinitos para Jogos 3D MMO. 2009. [PDF, in Brazilian Portuguese].

BRANCHER, J. D.; BEVILACQUA, F.; FERREIRA, N. B.; IOP, R. D.; MINUZZI, L.; FERREIRA, T. K. Um Portal de Jogos Casuais Educativos. Jogos Eletrônicos: Mapeando Novas Perspectivas. 1 ed. Florianópolis, SC: VISUAL BOOKS, 2009, v. 1, p. 197-210.